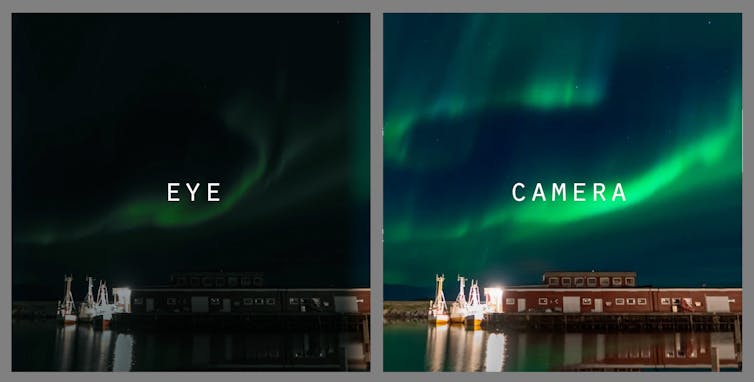

Smartphone cameras have significantly improved in recent years. Computational photography and AI allow these devices to capture stunning images that can surpass what we see with the naked eye. Photos of the northern lights, or aurora borealis, provide one particularly striking example.

If you saw the northern lights during the geomagnetic storms in May 2024, you might have noticed that your smartphone made the photos look even more vivid than reality.

Auroras, known as the northern lights (aurora borealis) or southern lights (aurora australis), occur when the solar wind disturbs Earth’s magnetic field. They appear as streaks of color across the sky.

What makes photos of these events even more striking than they appear to the eye? As a professor of computational photography, I’ve seen how the latest smartphone features overcome the limitations of human vision.

Your eyes in the dark

Human eyes are remarkable. They allow you to see footprints in a sun-soaked desert and pilot vehicles at high speeds. However, your eyes perform less impressively in low light.

Human eyes contain two types of cells that respond to light – rods and cones. Rods are numerous and much more sensitive to light. Cones handle color but need more light to function. As a result, at night our vision relies heavily on rods and misses color.

The result is like wearing dark sunglasses to watch a movie. At night, colors appear washed out and muted. Similarly, under a starry sky, the vibrant hues of the aurora are present but often too dim for your eyes to see clearly.

In low light, your brain prioritizes motion detection and shape recognition to help you navigate. This trade-off means the ethereal colors of the aurora are often invisible to the naked eye. Technology is the only way to make those colors appear brighter.

Taking the perfect picture

Smartphones have revolutionized how people capture the world. These compact devices use multiple cameras and advanced sensors to gather more light than the human eye can, even in low-light conditions. They achieve this through longer exposure times (how long the sensor collects light), larger apertures (how wide the lens opening is) and higher ISO, a setting that controls how strongly the camera amplifies the light the sensor has collected.
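
To make the trade-off between these three settings concrete, here is a minimal sketch in Python. It assumes the standard photographic rule of thumb that image brightness scales with exposure time and ISO and falls with the square of the f-number. The function names and the example settings are illustrative, not taken from any particular phone.

```python
import math

def relative_exposure(seconds, f_number, iso):
    """Relative image brightness under the usual rule of thumb:
    proportional to exposure time and ISO, inversely proportional
    to the square of the f-number (a smaller f-number is a wider aperture)."""
    return seconds * iso / f_number ** 2

def stops_between(darker, brighter):
    """Difference in photographic stops; one stop is a doubling of brightness."""
    return math.log2(brighter / darker)

# Hypothetical settings: a quick handheld snapshot vs. a 3-second night shot.
snapshot = relative_exposure(seconds=1 / 30, f_number=1.8, iso=800)
night_shot = relative_exposure(seconds=3.0, f_number=1.8, iso=800)

gain_in_stops = stops_between(snapshot, night_shot)
print(f"The night shot is about {gain_in_stops:.1f} stops brighter")
```

With the aperture and ISO held fixed, the 3-second exposure collects 90 times as much light as the 1/30-second snapshot, which is why long exposures matter so much for a faint aurora.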

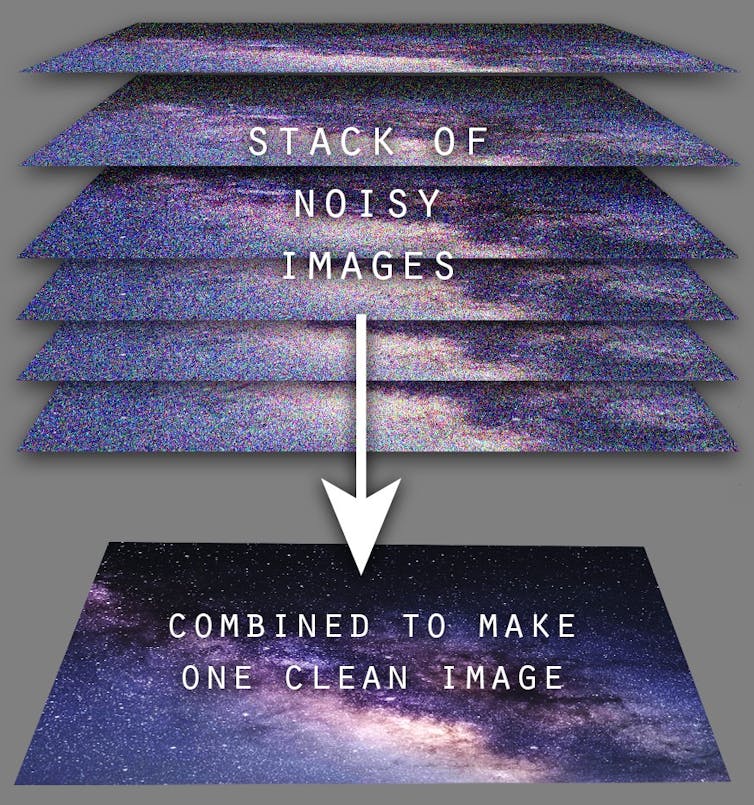

But smartphones do more than adjust these settings. They also leverage computational photography to enhance your images using digital techniques and algorithms. Image stabilization reduces the camera’s shakiness, and exposure settings optimize the amount of light the camera captures.

Multi-image processing creates the perfect photo by stacking multiple images together. A setting called night mode can balance colors in low light, while LiDAR capabilities in some phones keep your images in precise focus.
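
The benefit of stacking can be illustrated with a toy simulation. The sketch below assumes each frame is the same dim scene plus random sensor noise and simply averages the frames pixel by pixel. Real phone pipelines also align frames and reject motion, which is omitted here, and the numbers are made up for illustration.

```python
import random
import statistics

def capture_frame(true_signal, noise_sigma, n_pixels, rng):
    """Simulate one noisy frame of a uniformly dim scene."""
    return [true_signal + rng.gauss(0, noise_sigma) for _ in range(n_pixels)]

def stack_frames(frames):
    """Average the frames pixel by pixel."""
    return [sum(pixels) / len(frames) for pixels in zip(*frames)]

rng = random.Random(0)  # fixed seed so the run is repeatable
frames = [capture_frame(true_signal=10.0, noise_sigma=5.0, n_pixels=2000, rng=rng)
          for _ in range(16)]

single_noise = statistics.pstdev(frames[0])
stacked = stack_frames(frames)
stacked_noise = statistics.pstdev(stacked)

print(f"noise in one frame:     {single_noise:.2f}")
print(f"noise after 16 frames:  {stacked_noise:.2f}")
```

Averaging N frames with independent noise reduces the noise by roughly the square root of N, so 16 frames give an image about four times cleaner while the faint signal stays the same.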

LiDAR stands for light detection and ranging. Phones with a LiDAR sensor emit laser pulses and time their reflections to quickly calculate the distances to objects in the scene, in any kind of light. LiDAR generates a depth map of the environment to improve focus and make objects in your photos stand out.
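
The distance calculation behind this kind of ranging is simple in principle. The sketch below assumes a basic time-of-flight model in which the sensor times a pulse's round trip. It is not any phone's actual implementation, and the 20-nanosecond timing is a made-up example.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458  # exact, by definition of the meter

def distance_from_round_trip(round_trip_seconds):
    """Distance to an object given how long a light pulse took to go out and back.
    The pulse covers the distance twice, hence the division by 2."""
    return SPEED_OF_LIGHT_M_PER_S * round_trip_seconds / 2

round_trip = 20e-9  # hypothetical measurement: 20 nanoseconds
distance_m = distance_from_round_trip(round_trip)
print(f"object is about {distance_m:.2f} m away")
```

A round trip of 20 nanoseconds corresponds to an object roughly 3 meters away. Repeating the measurement across many points in the scene is what produces a depth map.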

Artificial intelligence tools in your smartphone camera can further enhance your photos by optimizing the settings, applying bursts of light and using super-resolution techniques to get really fine detail. They can even identify faces in your photos.

AI processing in your smartphone’s camera

While there’s plenty you can do with a smartphone camera, regular cameras do have larger sensors and superior optics, providing more control over the images you take. Camera manufacturers like Nikon, Sony and Canon typically avoid tampering with the image, instead letting the photographer take creative control.

These cameras offer photographers the flexibility of shooting in raw format, which allows you to keep more of each image’s data for editing and often produces higher-quality results.

Unlike dedicated cameras, modern smartphone cameras use AI both while and after you snap a picture to enhance your photos’ quality. While you’re taking a photo, AI tools analyze the scene you’re pointing the camera at and adjust settings such as exposure, white balance and ISO, while also recognizing the subject you’re shooting and stabilizing the image. These adjustments help you get a great photo when you hit the button.

You can often find features that use AI, such as high dynamic range, night mode and portrait mode, enabled by default or accessible within your camera settings.

AI algorithms further enhance your photos by refining details, reducing blur and applying effects such as color correction after you take the photo.

All these features help your camera take photos in low-light conditions, and they contributed to the stunning aurora photos you may have captured with your phone camera.

While the human eye struggles to fully appreciate the northern lights’ otherworldly hues at night, modern smartphone cameras overcome this limitation. By leveraging AI and computational photography techniques, your devices allow you to see the bold colors of solar storms in the atmosphere, boosting color and capturing otherwise invisible details that even the keenest eye will miss.

Douglas Goodwin, Visiting Assistant Professor in Media Studies, Scripps College

This article is republished from The Conversation under a Creative Commons license. Read the original article.